|

|

|

|

|

|

|

|

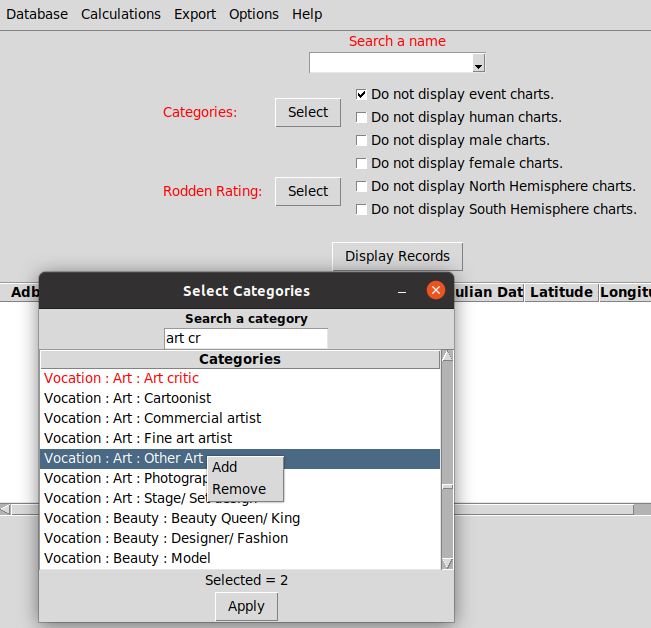

In this article we try to explain to astrology students how to use basic statistical techniques to analyse astrological data. The methods belong to the standard statistical tools, taught at colleges and universities to present and analyse data for social and natural science. They can also be used to evaluate astrological data, as we will show you with a study that compares the astrological profiles of Art Critics with the expected values found in the Astrodienst database (ADB).

The goal of this article is not to present readers a case for or against astrology. Astrology as a science does not exist and for astrology as a belief system the rule ask one Jew, you'll get two opinions still applies. So you cannot simply test astrological claims, as there will always be astrologers who claim that the wrong test methods were used. This heterogeneity does not make astrology as such irrational, but it will make it difficult to test astrology empirically in an by astrologers or scientists accepted way. Statisticians and scientists are less interested in opinions. They want to present the data and analyze the data using standard methods that are based on proven mathematical formula's. What astrological findings are based on randomness and which outcomes could present a case for astrology?

Dane Rudhyar made some proposal to decipher this major astrological question in Statistical Astrology and Individuality, but his basic assumptions were rather naïve and speculative.

But Rudhyar, who criticized statistical testing as being too reductionistic, did not provide any objective tool to check his speculations about his “certainly not 100% accurate“ astrological prejudices. He ended his quest with circular reasoning:

For us, the simple goal was to find out what can astrologically be seen in the categories of the ADB database. Facts do matter and are more relevant to judges than the opinions of astrologers how things according their books or personal experience should be. Are there relevant astrological differences between ADB categories? Astrology books do assume them, but the only way to discover them is to simply compare ADB groups in a statistical way. For this reason we compared astrologically hundreds of ADB categories with the ADB as a whole. A list of them can be found here: ADB categories statistically evaluated.

The other goal of this article is to show our readers how easy it is to use statistical methods to do relevant astrological research. The tools are there, the time is right, and so the elemental astrological studies can and should be done.

We see no sound reasons for astrologers to postpone it.

Fifty years ago, researches needed access to expensive software like the Statistical Package for the Social Sciences (1968), but the basic statistical techniques can now be done by free open source software.

For centuries, astrologers used more or less precise ephemeris tables to calculate planet and house positions. But current astrology software calculates planet positions in milliseconds, presenting them in a pretty graphical way. Most astrology software can also do some research.

For centuries, astrological databases were unavailable. Astrology was explained by case studies, providing anecdotal evidence at best. Today, astrological databases are available on the internet, including plenty of biographical and other relevant data.

Actually, it should be a marvellous time to do predictive astrology again without relying on obscure and outdated astrological sources. As we have the tools and the data to identify the astrological risk factors for disease and disaster. Would you go back to the time when William Lilly presented his Christian astrology? Or the time when the disciples of Jesus presented their Gospels? Lilly, objective or not, became a hero for many astrologers. And Jesus, myth or not, became a God for the followers of his twelve apostles. But there are good reasons to doubt the validity of their mythical claims. For this reason new observations should be done, before maybe false gospels are endlessly repeated in time.

In the case of astrology, research can be redone using modern statistical techniques on the categories of the AstroDatabase (ADB). Of course, the study of astrology can still be done by doing private observations, but that would not make astrology a predictive science. It would stay a personal believe system, that somehow works for or appeals to some people, but has little predictive value for the rest.

The ADB was designed to enable astrological research, so it contains categories of groups that can be compared. See Help:Category - Astro-Databank for details.

The actual assignment of ADB entries to certain categories can often be disputed. Categories dealing with personality traits tend to be subjective, categories dealing with medical disease lack specificity. But what strikes most is the obvious underreporting in most categories: Why should only 53 ADB entries have an extraordinary talent for music in a database that contains at least famous 332 conductors, 1057 composers and 1976 instrumentalists?

The main reason for this obvious neglect is that the place, date and time of birth and especially the reliability of the birth time, have major priority of ADB editors. Here, and in the related concept of Rodden Rating they strive for scientific reliability and correct each other when needed, but the biography and categories are up to the preferences of the individual ADB editors. And because finding and documenting birth times is difficult enough, most ADB editors concentrate on this. Next, they add some categories that seem to be fitting to the record, some life events, and then they go on with the next record.

Only in exceptional cases, for instance when Louis Rodden described the faith of her family members or former ADB editor svi started his ADB rectification experiments, more attention to details were given in the ADB records. But most of the time ADB editors give only a short extract of facts as found in the Wikipedia. To copy and paste all of it to the ADB would be violation of the copyright. They assume that ADB users study the relevant Wikipedia entries to notice other biographical facts, including possible categories that could apply to the native.

So, if someone wants to study the astrology of anxiety disorder, looking only at the 48 cases mentioned in the ADB (0,1% of 50000) is a bad idea. As the sector Epidemiology of anxiety disorder of the Wikipedia suggests that there should be thousands of them (9 to 29% of the population).

This limitation of the ADB is not a public secret. In ADB Forums like Astro-Databank discussion, it has been explained. It is just a limitation dealing with priorities and lack of time of the ADB volunteers. Astrology researchers using the ADB categories should be aware of its limitations and try to correct for it, I read in 5.3. Data collection and submission of data to Astrodatabank:

Again, the priority of the ADB editors lies in the reliability of the found birth data. For this reason they prefer the often rounded Birth Certificate birth-time (Rodden rating AA), which can be imprecise, but is free from speculation. But other ADB claims tend to be hear say knowledge that come from several sources. So to do any serious research on ADB data the presented biographical data, presumed to be fitting categories and the many omissions in the tagged categories and personal data should also be checked, as one cannot expect that the few ADB editors have time to keep the large amount of biographical data updated. But of course the ADB editors did their best. And they had for sure no intention to deceive you by providing alternative facts. As they knew that facts can be checked. For this reason your and mine research of ADB categories like art critics can be fruitful. But it is important to know that in most categories not dealing with occupations, underreporting and thus systemic bias are rule.

AA times are the best Rodden Ratings to use in astrological research, as they are based on documented facts like the birth record, that can be checked. But few astrology students realise that a rounded 4 AM birth time could imply that the native could have a different ascendant, Moon sign and House Lords, when we see this AA time as having a imprecision of +/- 30 or even more minutes. And a shift in time of only 30 minutes would dramatically alter most predictive astrological techniques.

To deal with the rather imprecise given birth times of individuals, astrologers invented rectification techniques. But because those techniques can also be abused to explain wrong astrological theories afterwards with it, rectified times got a Rodden Rating of C (Caution, no source). So we only allowed them only in our control groups, where they could contribute their tiny part to the by statisticians and empirical scientists needed law of large numbers.

For statisticians studying astrology in large groups imprecise birth dates are a cause of extra noise in both their case and control groups. But statisticians studying the properties of large and small groups (samples) learned how to deal with noise in groups. As more or less imprecise measurements are always found. It causes more variation, especially in small groups. But estimations of the mean and likely variation around the found values can still be made.

But this on inherent uncertainty based error causes total unpredictability in groups consisting of one member, unless you study well-known matters like the theoretical question how many limbs a Sun in Aries had before he had his accidents. But the astrological assumption or as I assume astrological prejudices (bias) that people born with Sun in Aries are aggressive or are prone to accidents are “certainly not 100% accurate”.

Notwithstanding its limitations, the by Lois Rodden's initiated ADB database became a standard reference work most astrologers still do rely on. It is their best tool to demonstrate astrological principles when they strive for some objectivity. When astrologers always referred to their personal experience with anonymous private clients only, they would lose credibility. As that scientifically seen bad habit would make their unlikely claims impossible to check. So they prefer well known ADB entries to illustrate their point of view in astrology books, based on their personal experience over years with thousands of anonymous private clients and probably some hundreds astrology books. But makes this habit of paying selective attention you wise?

Astrologers seldom use case and control groups to do the kind of research that could give the astrological community answers to simple questions like: How more often are Sun in Taurus persons gardeners? What were the found and expected frequencies? Was the effect size large enough to be predictive in practice? Were the found differences statistically significant? Are the findings likely to be reproduced?

Without answering those basic questions, but only referring to private experiences and personal wisdom, astrology books will remain vague. To answer those questions, we did the work more bottom up. We did what David Cochrane called assumptionless research to avoid what the Wikipedia calls cherry-picking.

To accomplish this, we calculated of existing ADB Categories the found and the expected mean values of astrological parameters like planet in sign or house. That should be statistically seen all there is in the ADB. Then, we compared them using elementary statistical methods. For this, the so-called standard error had to be taken into account to answer the for predictive astrology important question: Are the found trends in the ADB categories likely to be reproduced? To answer those questions effect sizes and their confidence intervals had to be estimated. We developed some simple tools for this.

In

this particular study we compare the astrological properties of

persons in the ADB category art critics with all timed ADB

entries of persons. But the methods also apply to the many other by

us investigated ADB categories. You can retrieve them all in

zipformat here.

In

this particular study we compare the astrological properties of

persons in the ADB category art critics with all timed ADB

entries of persons. But the methods also apply to the many other by

us investigated ADB categories. You can retrieve them all in

zipformat here.

The ADB as a whole could be seen as a sample of the population of all astrological charts, though it is certainly not a representative one. So we needed to provide you information about its major statistical characteristics. Not all control groups were done yet, but the selection criteria of TkDbAstro enables you to do most of the needed research yourselves. So you should not blame us as the messengers, but the missed opportunities you could do this job for your self. As the ADB is still the best source of birth time information of more or less known people that astrologers can access.

The only problem was that you had to study a lot of charts to do some research. And because it is impossible to oversee all those charts without making use of statistics, we developed a free tool for it. The credit for the programming goes to the in Switzerland born, Turkish geologist Tanberk Celalettin Kutlu, with the ADB Forum nickname of dildeolupbiten.

The painstaking work of arranging the selected raw astrological data from the AstroDatabase (ADB) to more informative statistical data presented in spreadsheets can be done by TkAstroDb. This open source Python program reads and processes the categorical data of ADB in contingency tables that can be read by well-known spreadsheets like Microsoft Excel and LibreOffice. You can retrieve the most recent results of the ADB Research Group in ADB stats.

In this article the focus is on the how, why and what to do with the by TkAstroDb provided statistical ADB data, using the principles of elemental statistical data analysis. We think that the frequencies found in the cross tables should be relevant for any genuine astrology researcher. As are the elemental statistical calculations done by TkAstroDb on expected values, effect-size, chi-square values, binomial p-values and (experimental) Cohen's d value. But of course, you are free to ignore them or understand them in your alternative way. We just provided the raw data that statisticians would like to see. See for my comments about the alternative views also Statistiek en astrologie volgens Dane Rudhyar (Dutch).

The installation and use TkAstroDb is explained at: http://github.com/dildeolupbiten/TkAstroDb.

TkAstroDb is still in development, so it is wise to check the above link for newer versions under Help /Check for updates.

We observed the planet in sign and house positions of 79 persons categorised as Category:Vocation : Art : Art critic found in the extract of the Astrodienst database (ADB) of Nov, 28 2018 released at 23h09 or Adb_Version_181128_2309.

The inclusion criteria were: 1) Having the entry Vocation Art Critic in the ADB and 2) a Rodden rating of AA, A or B for the birth data.

The observed values of planet in sign and their standard deviations were:

|

Aries |

Taurus |

Gemini |

Cancer |

Leo |

Virgo |

Libra |

Scorpio |

Sag |

Cap |

Aqua |

Pisces |

SD |

|

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

|

Sun |

9 |

12 |

8 |

10 |

9 |

5 |

4 |

5 |

3 |

5 |

2 |

7 |

2,9 |

|

Moon |

6 |

9 |

5 |

4 |

9 |

8 |

8 |

2 |

7 |

8 |

9 |

4 |

2,3 |

|

Mercury |

9 |

8 |

11 |

6 |

10 |

4 |

3 |

7 |

4 |

3 |

5 |

9 |

2,7 |

|

Venus |

8 |

9 |

7 |

12 |

9 |

6 |

4 |

4 |

5 |

4 |

6 |

5 |

2,4 |

|

Mars |

10 |

6 |

4 |

9 |

4 |

11 |

5 |

8 |

6 |

8 |

3 |

5 |

2,5 |

|

Jupiter |

7 |

8 |

14 |

3 |

8 |

7 |

5 |

7 |

8 |

3 |

6 |

3 |

2,9 |

|

Saturn |

7 |

9 |

8 |

3 |

7 |

8 |

6 |

6 |

5 |

9 |

4 |

7 |

1,8 |

|

Uranus |

9 |

9 |

8 |

6 |

6 |

6 |

3 |

7 |

2 |

9 |

7 |

7 |

2,1 |

|

Neptune |

7 |

9 |

7 |

13 |

9 |

14 |

6 |

3 |

3 |

3 |

0 |

5 |

4,0 |

|

Pluto |

2 |

20 |

18 |

21 |

10 |

2 |

1 |

2 |

0 |

0 |

1 |

2 |

8,0 |

|

N Node |

9 |

11 |

4 |

4 |

6 |

4 |

5 |

9 |

4 |

6 |

5 |

12 |

2,8 |

|

Chiron |

14 |

15 |

2 |

5 |

5 |

2 |

5 |

1 |

1 |

4 |

11 |

14 |

5,2 |

|

Totals |

97 |

125 |

96 |

96 |

92 |

77 |

55 |

61 |

48 |

62 |

59 |

80 |

948 |

As you can see the observed difference between Sun in Taurus (12) and Sun in Aquarius (2) is quite large. Moon in Scorpio (2) scores low and Moon in Taurus (9) scores higher than expected. So there might be some astrological trends. Venus in cancer also scores 12, three times higher than the below average found values of Venus in Capricorn (4), Libra (4) and Scorpio (4). Of the personal planets Jupiter in Gemini (14) scores best.

An astrologer might comment: These findings do not surprise me, as Taureans love to be surrounded by beauty, but Aquarians behave more like a clochard. Aquarians do not attach to material things, but Taureans value them. These hindsight remarks sound reasonable. But would an astrologer be able to predict these tendencies before having access to the presented data? That is unlikely, as astrologers seldom publish any quantitative research.

Nevertheless, the qualitative explanation above could be a valid explanation for some interesting quantitative findings in this sample of art critics. Maybe other outcomes could be astrologically explained as well, so that some vague suggestions as found in astrology books, could become established facts found back in the ADB. But because of their initial vagueness (could instead of must), we would have not yet a case for astrology, as no clearly stated hypothesis was tested.

Statisticians would immediately notice the standard deviation (SD) presented in the last column. What does a SD of 2,9 imply for them? They would expect quite large deviations around the mean (79/12=6,6) with this small sample size. For them, knowing that the sample error plays a major role in producing variation, getting opposite results like Sun in Taurus (2) and Sun in Aquarius (12) could have happened just as well. Nevertheless, given the found data, they might predict that getting a Sun in Taurus would be more likely than getting a Sun in Aquarius with another sample of art critics. For the obvious reason that they have to adjust their chance models to the actual found data, not to the expected data. And they tend to change their views when the NULL hypothesis, predicting that the found values just reflected the expected variation found in a random ADB sample, would be rejected.

The positions of the house cusps and their standard deviations were:

|

Aries |

Taurus |

Gemini |

Cancer |

Leo |

Virgo |

Libra |

Scorpio |

Sag |

Cap |

Aqua |

Pisces |

SD |

|

|

Cusp 1 |

4 |

5 |

7 |

4 |

9 |

9 |

3 |

10 |

8 |

11 |

5 |

4 |

2,6 |

|

Cusp 2 |

7 |

4 |

9 |

6 |

5 |

8 |

9 |

2 |

10 |

3 |

12 |

4 |

3,0 |

|

Cusp 3 |

8 |

7 |

9 |

7 |

4 |

6 |

6 |

8 |

3 |

8 |

3 |

10 |

2,2 |

|

Cusp 4 |

8 |

10 |

9 |

8 |

6 |

4 |

6 |

6 |

8 |

4 |

5 |

5 |

1,9 |

|

Cusp 5 |

5 |

11 |

10 |

6 |

9 |

5 |

4 |

3 |

8 |

7 |

5 |

6 |

2,4 |

|

Cusp 6 |

7 |

5 |

15 |

10 |

2 |

6 |

6 |

3 |

4 |

8 |

8 |

5 |

3,3 |

|

Cusp 7 |

3 |

10 |

8 |

11 |

5 |

4 |

4 |

5 |

7 |

4 |

9 |

9 |

2,6 |

|

Cusp 8 |

9 |

2 |

10 |

3 |

12 |

4 |

7 |

4 |

9 |

6 |

5 |

8 |

3,0 |

|

Cusp 9 |

6 |

8 |

3 |

8 |

3 |

10 |

8 |

7 |

9 |

7 |

4 |

6 |

2,2 |

|

Cusp 10 |

6 |

6 |

8 |

4 |

5 |

5 |

8 |

10 |

9 |

8 |

6 |

4 |

1,9 |

|

Cusp 11 |

4 |

3 |

8 |

7 |

5 |

6 |

5 |

11 |

10 |

6 |

9 |

5 |

2,4 |

|

Cusp 12 |

6 |

3 |

4 |

8 |

8 |

5 |

7 |

5 |

15 |

10 |

2 |

6 |

3,3 |

The observed planets in Placidus houses and their standard deviations were:

|

H1 |

H2 |

H3 |

H4 |

H5 |

H6 |

H7 |

H8 |

H9 |

H10 |

H11 |

H12 |

SD |

|

|

Sun |

4 |

6 |

7 |

6 |

5 |

7 |

7 |

6 |

6 |

11 |

7 |

7 |

1,6 |

|

Moon |

10 |

8 |

8 |

6 |

4 |

10 |

5 |

7 |

10 |

2 |

5 |

4 |

2,6 |

|

Mercury |

5 |

4 |

6 |

7 |

7 |

7 |

4 |

9 |

8 |

10 |

5 |

7 |

1,8 |

|

Venus |

7 |

9 |

8 |

3 |

6 |

5 |

5 |

9 |

13 |

4 |

5 |

5 |

2,7 |

|

Mars |

6 |

6 |

3 |

2 |

7 |

7 |

11 |

12 |

6 |

5 |

10 |

4 |

3,0 |

|

Jupiter |

4 |

7 |

5 |

11 |

10 |

8 |

8 |

9 |

6 |

4 |

4 |

3 |

2,5 |

|

Saturn |

8 |

6 |

6 |

11 |

2 |

8 |

8 |

5 |

7 |

9 |

3 |

6 |

2,4 |

|

Uranus |

6 |

4 |

7 |

4 |

4 |

7 |

13 |

5 |

8 |

9 |

6 |

6 |

2,5 |

|

Neptune |

3 |

6 |

5 |

11 |

11 |

7 |

4 |

5 |

5 |

6 |

9 |

7 |

2,5 |

|

Pluto |

4 |

5 |

9 |

6 |

11 |

6 |

7 |

3 |

8 |

8 |

7 |

5 |

2,1 |

|

N Node |

6 |

10 |

2 |

6 |

5 |

6 |

5 |

7 |

12 |

6 |

7 |

7 |

2,4 |

|

Chiron |

9 |

6 |

9 |

5 |

3 |

4 |

11 |

10 |

3 |

7 |

6 |

6 |

2,6 |

When interpreting an individual chart, most astrologers would look for astrological indicators in the chart that matched with that profession. But what astrological factors are important for an art critic? How can they be known without having access to relevant empirical data?

Of course without relevant astrological data nothing special can be said. But astrological speculations can still be done. And the most appealing stories combine fact and fiction found in astrology books. The widely accepted practice among astrologers is to use their imagination to retell the found facts with the help of astrological symbolism. The applied symbols acts like a kind of glue that make their explanation look like astrology, just like the introduction of rhyme and metrics transforms a text into poetry.

Astrologers of course know that they cannot predict with the vague rules of astrological symbolism. Just like poets know that a well sounding poem still belongs to the realm of fiction. It is just their way of of story telling. But when astrologers can successfully frame their story with it astrological myths, it works for them and their clients, like well chosen political slogan can work for a campaign. See: Running for Office? Try These Political Campaign Slogan Ideas by Shane Daley. As politicians and astrologers want to convey a personal message, they are less interested in the facts.

Above we presented some major astrological properties of the category art critics from the ADB. Could they be used to interpret the chart of a person known to be an art critic? Could they predict a successful career in this field? To answer these questions one could do probability calculations on the astrological factors found the ADB group of art critics. Now the question is: Do we see certain patterns in it? And how should they be explained?

When interpreting ADB samples, including our ADB categories, astrologers and statisticians have different premises and points of views. What statisticians see as just another ADB sample probably showing the expected random values and bias, are for astrologers a group of unique individuals, whose essential individual properties one for one could linked to astrology.

Of course, not everything can be explained astrologically: Gender or homosexuality for instance cannot be read from a chart, but quite a lot could be explained when studying and combining enough astrological details, like We have a Sun in Taurus, having Lord 2 in 10, Venus in 9 etc. For this reason astrologers prefer to focus on individual charts, not on plain statistics that tend to blur the unique manifestation of each individual being or event. And here they have a point. Medical doctors and psychologists actually do the same.

See: The wisdom hierachy: A simple introduction to reasonable thinking.

Nevertheless, astrologers also deal with groups and risks, when they assume that people with a prominence of planets in Aries tend to be more aggressive. When astrologers see an aggressive individual having many planets in Aries, they would for sure recall that generalisation found in astrology books and see it as a case for astrology. And when not, another explanation had to be found. Empirical facts based on groups still do matter, and having a quick glance at the ADB category aggressive / brash could be wise.

But what is a “good enough” quick glance at this ADB category? Astrologers supposing that chance does not exist, systematically ignore the role that randomness plays in natural variability. For them, all they encounter is a kind of magic they like to play with it. Thus, the idea that getting 12 Taureans and only 2 Aquarians under 70 art critics could be due to some sampling error, would not impress them. As they dealt with real cases, not with statistical concepts. But a statistician could simply ask: What would happen if we had studied 790 instead of 79 cases of art critics? Would we still see those impressive patterns in the ADB? And a philosopher could ask: Are your astrological ideas also not concepts? Astrological concepts you project onto horoscopes, like tasseologists see them in the layout of tea leaves?

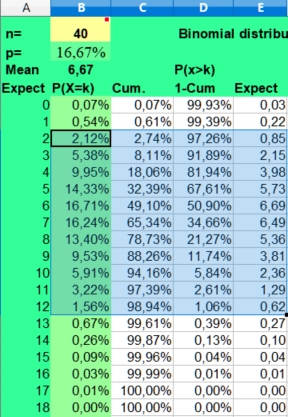

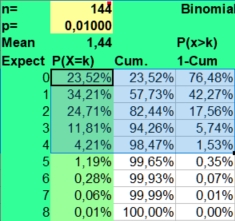

Indeed,

our ADB observations could be the result of a well known bias that

has nothing to do with astrology as the study of significant

coincident patterns in the sky. When we throw 40 times a dice, we

will also see a large difference between the highest and lowest

value. Frequencies ranging from 2 to 12 would not be that unusual as

the Binomial distribution on the left predicts. Only the values 0, 1

and 13 or larger would be expected in less than 5% of cases.

Indeed,

our ADB observations could be the result of a well known bias that

has nothing to do with astrology as the study of significant

coincident patterns in the sky. When we throw 40 times a dice, we

will also see a large difference between the highest and lowest

value. Frequencies ranging from 2 to 12 would not be that unusual as

the Binomial distribution on the left predicts. Only the values 0, 1

and 13 or larger would be expected in less than 5% of cases.

Should we assume a false dice? Metaphysical factors? Jupiter in action? No, certainly not. It was just the sampling error. Some more throws are needed to infer that the dice is false or not. Only a fool would return to the shop to complain about a false dice after only forty trials with a dice.

For centuries layman, poets, theologians, mathematicians, polymaths, and astrologers speculated about risks and causality. They wrote books about the risks involved with eclipses, throwing a dice, offending the gods or having a bad or lucky day. And they also became interested in numbers. Is fasting 40 days enough to see something more? Numbers and relations between numbers (aspects) got significance.

In short: If you do this or see that, follows some another event after that quite more often? And when not, which other factors could be involved? That are still the major questions of all human and scientific thinking. But the ways to treat those questions differ a lot.

Today statistical tests are preferred to exclude coincidence, but few astrologers and even most scientists do not know that the first empirical definition of probability by Laplace, being the number of found cases divided by the number of possible cases, was not earlier formulated than in 1814. We now take his formula for granted, but before that risks dealt with deities. Fortune telling was seen as the work of special heavenly messengers and speculating about it was only the privilege of abstract thinking mathematicians and theologians. So, astrologers could ignore probability calculations for centuries. Astrologers saw the seven planets as gods ruling particular domains and were happy to be able to explain their world with it.

Actually, the confidence intervals involved with possible astrological effects were only calculated in the second halve of the 20th century. But since then the gap between the not using confidence intervals astrologers and scientists became huge. Applied scientists like medical doctors, sociologists and psychologists could profit enormously from statistical testing, but astrologers refused to see its benefits. See also: AstroWiki: Statistics: Continuing Controversy.

The by astrologers ignored rules of chance are just hidden in plain sight. As they always happen. But the same could be said of unusual coincidences. They also do happen. Only really blind persons could not see them. Astrologers expecting to encounter a meaningful cosmos instead of the usual chaos, prefer to link special life events with particular astrological events in the sky. They see any coincidence of them as an opportunity to explain their magical world to others, in which similar things seem to happen: As above, so below. But how could their found cases for astrology convince others? How could we reasonably check their assumptions?

Normally a theory is tested by its correct predictions. But the major problem with the unstructured approach of astrologers is that they can not (yet) predict with it. Using a myriad of vague, illogical and often contradictory rules, astrologers can explain almost everything, but when it comes to predicting facts one can not rely on fuzzy thinking. So, it is no coincidence that skilful astrologers showed up to be bad predictors in experimental tests.

In Sir Karl Popper' s vision the art of science is not just to explain events afterwards, but to predict them before they happened using the same strict empirical rules. For this reason Popper criticised the qualitative Marxist and Freudian theories as not being scientific. They could explain events afterwards, but could not be tested in practice. It is okay to conjecture a theory on the basis of historical events, but the theory should also be refutable. Scientific theories should be specific. Vague terms and concepts should be avoided.

Most scientists would agree with Popper that established facts do not need to be explained. And certainly not by unpredictive ad hoc methods used by astrologers, for the simple reason that you cannot explain things afterwards that you could not reasonably foresee. In their opinion, instead of providing hindsight wisdom that could lead to better attempts in the future, astrology as a pseudo-science would contribute to more Maya. Just like theologians did, when they explained human misery as God's punishment for personal sins.

Views that have little predictive value could be simply replied with a Is that so? or So what? When astrologers notice that you have a Pluto transit, you might accept that pseudo-astronomical reality with: So what? But it might affect you when you believed in astrology. Then the thinking error might refer to your attachment to astrology and your belief that a Pluto transit could influence your soul or ego, just like God is supposed to punish the sinners. In those situations of biased thinking placebo or nocebo effects could flourish just by suggestion. But that would still not be any proof of astrology or eternal justice being at at work. It would more likely be an instance of man's search for meaning, that science cannot provide.

Only when the same pattern repeatedly popped up much more than expected under believers and not believers, then there is a trend that needs further investigation. But we cannot rely on incidental observations and ad hoc rules, that sometimes confirm this principle, another time that rule, but in the long term would only give us false hope .

The immediately experienced unpredictability of many stochastic events contribute to the fun with gambling. This with the possibility of immediate gratification make dice games so addictive. This play with fortune will appeal even more to us when our life is rather dumb and boring. Do I become rich tonight in the casino with one more throw? Does Jupiter stands tonight favourable? That is the big roulette question. It could be the decisive moment people for years waited for.

This could be a good reason for you to watch your stars and numerology today. As chance does not exist according to their proponents. But there is also an inherent predictability when working with large numbers, where the business models of bookmakers and insurance companies rely on. They use statisticians to fill in this gap of uncertainty between immediate risks and long term effects by carefully estimating the risks of events that they could observe. They have a wait and see approach to by people shared suppositions without statistical proof. It would not influence their business as usual, unless this deviation from the found mean had some immediate marketing advantage for them.

But assuming that astrology can deal with this uncertainty for you seems rather foolish. Although some astrologers believed that they could explain the faith of lottery winners, they never became rich. Whatever method used, personal experience or scientific research, one can seldom predict new events with hindsight wisdom. That makes your life an empirical challenge.

But who is right? The individual gambler following his gut feeling in the here and now? Or statisticians and scientists dealing only with long term follow-up group behaviour? Actually, once established habits and presumptions predict most of the times the flow that people take. Even if it leads them probably in the wrong direction. How to predict this empirically found fact?

The practical problem is that we seldom have time and opportunity to do the necessary research. But we do have access to many opinions. The scientific approach can be boring, painstaking and will often be unavailable to most of us. And the scientific studies done might be irrelevant for the questions we are faced with. But we do have access to many opinions: On facebook, twitter ad the last breaking news.

Does this bombardment of news make you any wiser? No, as there is seldom a valid reason to assume that one point of view should win the contest. It could be more beneficial to accept the coexistence of different realms of reality that might enough complement each other. But that needs a lot training, as most born with views on the world, do differ a lot.

Statisticians do their claims on groups, never on individuals. But astrologers, psychologists and medical professionals deal with individuals. How could this work ever be done? It any rational prediction or explanation of the found facts possible, when their clients are always members of many astrological, psychological and medical categories at the same time? Their clients would thus to a certain extent follow a particular group rule, but few would be a typical exponent of that particular group, because the individual persons also belonged to quite different other groups and subcategorises.

One should not that easily generalise, but denying the existence of group effects would also be a mistake. As without clearly defined group effects, no sound medical or astrology book could ever be written. If all charts were unique and thus incomparable, there could not be any astro-logy at all. So, both medical doctors and astrologers should benefit from statistics comparing large groups. Well-designed prospective research on large groups is the cornerstone of evidence based medicine, on which most medical progress rest. Denying or neglecting its results could be a reason to suspend or ban a medical doctor. But in the world of astrology, the statistical empirical methods are met with suspicion if not with open hostility. But this has not been always been the case as the following history will tell you.

Between fun with dices and the law of the large numbers, there is a lot of room for speculation, the polymath Gerolamo Cardano found out (Wikipedia):

A logical and mathematical approach to the seven planets of astrology in the time of Cardano, is to assume that every planet has an equal risk of being placed in one of the twelve signs and houses. Then the risk of having Sun in sign or house could be calculated as 1/12. The risk of not falling into that sign would be calculated as 11/12. This is the binomial or p or p-1 approach of the usual this or that pertains kind of logical thinking.

When we found that plumbers had many more than expected (1/12) planets in scorpio, that would be called an astrological effect. See: The plumbers story .

But the problem is that we do not know the exact probability of any event in any group. So, assuming p is 1/12 seems to be a logical approach to deal with the astrological reality, but in empirical practice it is seldom correct. It is only a simple first best guess when dealing with fast moving personal planets in signs and houses. And it enables us to calculate the sampling error, which is important because it predicts which other values could be expected. From this we can estimate confidence intervals.

Actually, the risk involved with the houses is seldom 1/12, as all house systems have slow and fast rising signs. The inner planets Mercury and Venus do not move independently from the Sun, as they will always be close to the Sun. Statistical evaluation done with a control group could correct for many kinds of systemic thinking errors, but not for all. In this article we will show you several approaches.

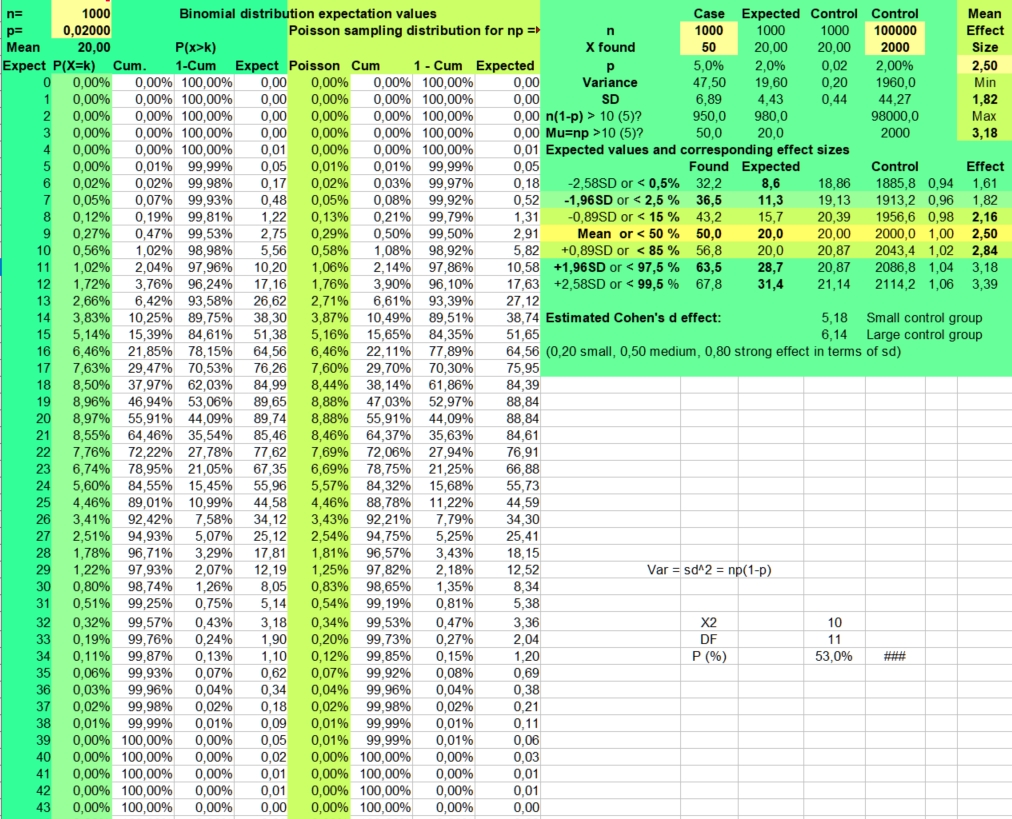

Binomial

calculator

Binomial

calculatorYou can download the spreadsheet which is used here as: Binomial_distribution_for_astrology.xlsx or Binomial_distribution_for_astrology.ods.

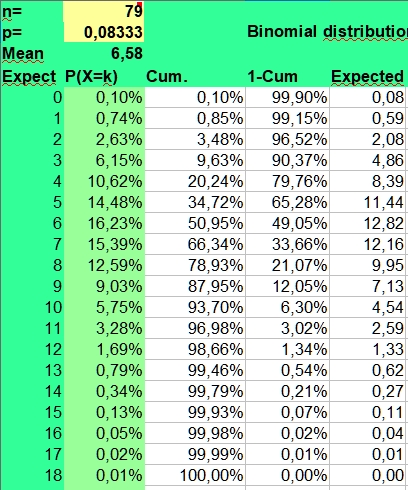

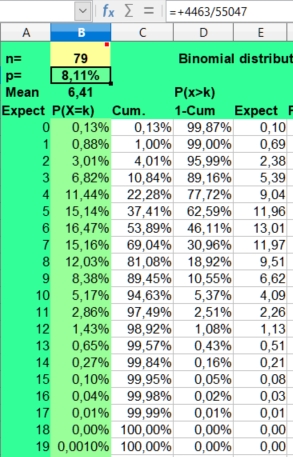

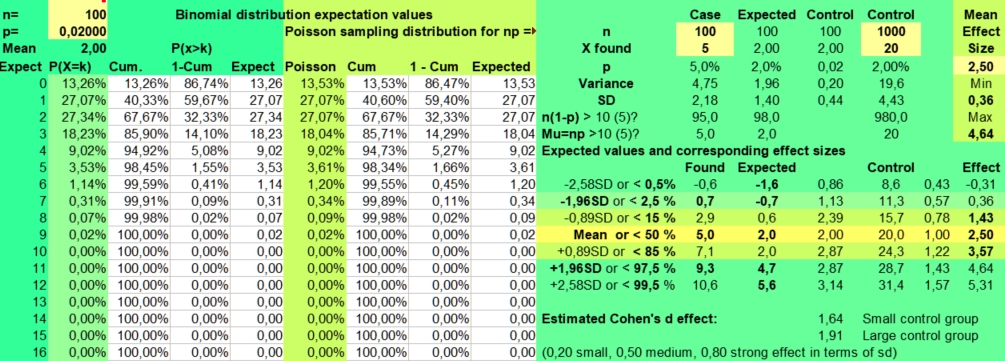

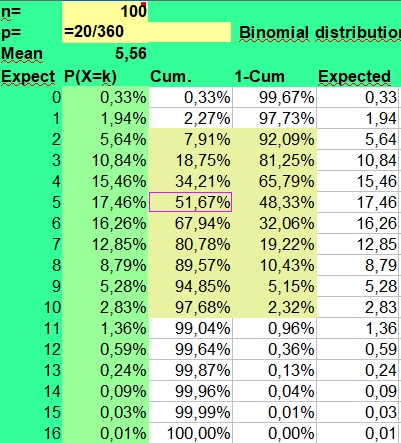

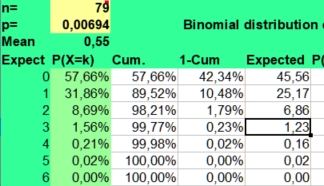

The binomial calculator used calculates the average frequency (mean) and the risks involved (P(X=k)) for found frequencies when throwing n times coins (p=1/2), dices (p=1/6) or doing astrological experiments with an assumed probability p = 1/12.

The risk of a hit is p and the risk of a miss is 1-p. This makes the distribution binomial, as only two possibilities (hit or miss) are investigated. A hit could be throwing a six with a dice (p = 1/6) or getting a Moon in Aries (p = 1/12), when investigating astrology. The risk of getting a frequency 0 to n hits for a particular side with n throws (experiments, cases) is displayed under the column P(X=k).

Under n (number) you fill in the number of investigated cases (here 79) and under p you fill in +1/12 to get the probability. The mean value is calculated as n*p or 79*1/12 = 6,58. But as the binomial distribution deals with discrete numbers, the values 6, 7 and 5 have the highest scores. Because of the asymmetry (skewness) of the distribution, 6 is more probable than the rounded mean 7 of 6,58. But as in the normal distributions, most values will fluctuate around the mean. Here values of 4 through 8 are found most often. They have a P(X=k)> 10% in the second column.

The spreadsheet also calculates Cumulative risks of getting a value of k or lower (third column) and the reverse risk 1- Cum of not getting a value of k or lower (fourth column), thus the risk of X > k (fourth column).

As you can see the risk of getting zero hits P(X = 0) for Sun in Aries with 79 throws of a fair 12 sided dice is only 0,10344 percent ((11/12)^79). Finding a frequency of zero cases for Sun in Aries is thus an unlikely event.

The expected value np of getting zero hits with 79 throws is 0,08 (79 times 0,0010), so when we would repeat this binomial experiment (n is 79, p = 1/12) a 100 times with fresh samples of 79, we could on average expect 8 cases of Sun in Aries having zero hits. Of course these 8 cases would be nothing compared to the most expected frequencies from 5 through 7 that would be seen more than a thousand times (100*12 is 1200).

But a point to remember is made. It is discussed under Data mining. When doing multiple observations, some unlikely findings are to be expected. In a crowded gambling hall there are always some excited winners. And that happy few could draw more attention than the expected majority of usual losers. Obviously this could lead to a false impression of what is going on or what statisticians call bias.

A low frequency of k = 2 has a binomial risk of 2,63%. Two cases could be expected, with a sample size of 79. So only the lower frequencies of 0 (0,10%) and 1 (0,74%) are within the exceptional 2,5% lower range of this binomial distribution.

The risk of getting the high frequency of k = 11 is 3,28%, which clearly falls outside the critical 2,5% upper range of a two-sided test. The risk of 12 is 1,69%, but values of 12 and higher are still found in 3,02% of cases. A frequency of 12 could be called borderline higher than expected, but not exceptionally high, as only frequencies above 12 are unlikely (1,34%) when applying common rules. Values from 2 through 12 could be expected in 98,66 - 0,85 = 97,81% of cases and values from 2 through 11 in 96,98 - 0,85 = 96,13% of cases. This is what we can expect assuming a risk of 1/12 and a sample size of 79.

Getting frequencies as low as of 2 or as high as 12, when 6 or 7 cases were expected, could hint to potential large effect sizes. But an astrological effect of a planet in sign or house must still be stronger, to reach statistical significance at the 5% level with 79 cases. As statistical significance depends strongly on sample size, much larger groups should be studied to see significant differences that could help us to come to conclusions. For this reason the statistician would ask, what if we studied 790 instead of 79 art critics?

In our study doing multiple measurements for planet in sign and house, every row yielding 12 values with a sum of 79 could wrongly be considered as containing 12 independent binomial tests with n is 79, p = 1/12. So, the measure of the 7 personal planets in sign, would yield 7 times 12 is 84 independent binomial tests. We might thus expect some 6 or 7 cases (84*0,10344) with a frequency of 0, but we did not encounter any zero at all.

For slow planets in sign zero frequencies were found and expected, but why did we not see them in the 144 measurements of planet in house? The reason is that we do not deal with a fair 12 sided dice , when dividing 79 possible hits over 12 signs and houses. Our situation is that of a sampling experiment without replacement. In that case the found frequencies per sign or house are not independent, as the sum of all values must equal the sample size. And then we may not use the multiplication rule of independent risks.

If you throw a fair dice, you will always get the same risk. New scores do not depend on previous ones. This is the situation of independent risks, the binomial distribution deals with. But when drawing lots, or when dividing 79 planets over 12 signs or houses, the calculations become much more complex as the possibilities of a new draw or assignment depend on the results of earlier throws.

Suppose that Sun in Aries had 19 hits against 79/12 (6,58) expected. This event with an effect size of 2,9 is very unlikely as P(x=19) = 0,0015% or 1 in 66894,3 cases and P(x>18) is 1 in 49048,7 cases. Then the expected value for having Sun in Taurus or another sign would be 60/11 (5,45). So the risk of getting another high score for the sun in another position would be much lower than the binomial distribution would have predicted. In this way there would be a kind of regression to the mean.

The same happens with the lower scores. If we had zero cases of Sun in Aries, the risk getting another low value in another sign would be very much decreased. As we now had to divide the 79 remaining hits over 11 instead of 12 signs. The expected value would be 79/11 (7,18) instead of 79/12 (6,58) of the binomial, which is 12/11 or 9,1 % higher.

So compared to the binomial distribution, when doing sampling experiments without replacement, clusters of extremely high or low values are not to be expected. High and low values are kept in balance as the sums of each row must be equal to the sample size.

Is that a major problem? Should we refrain from using the binomial distribution when calculating confidence intervals? No. The binomial distribution is still a great tool to predict the usual to be expected probabilities as the inaccuracies we speak of only happen with relatively small samples having extreme values (Outliers), like that of the ADB distribution of slow planets in sign. But here we often deal with special cases which cannot be explained with variance..

When dealing with larger samples, having smaller confidence intervals for the effect size, the calculated risks of the binomial distribution do in practice very well predict the actual values one could encounter. And the larger deviations only happen when the found values do differ a lot of the mean.

The risk of getting zero cases of Sun in Aries with a sample size of 790 would be extremely low, so low that you would not see it in practice (p = 1,40 E-30). But a similar unlikely event as getting P(X = 0) is 0,10344 % with 79 throws (Expected value is 0,08 or in practice most of the times zero) with a fair 12 sided dice is getting P (x=45) is 0,11% with a sample size of 790 (Expected value is 0,88, thus 1 case out of 790 expected). But here the error because of our sampling experiment without replacement would be less marked, as after getting an extremely low value of 45, the next 790 - 45 = 745 throws had to be divided over 11 instead of 12 signs. The expected value would be 745/11 (67,2) instead of the mean value 790/12 (65,8), which is only 2,8% higher. And that is a small deviation compared to the confidence interval of that estimate (50 - 81).

For statisticians regression to the mean refers to the empirical rule that the larger the sample taken from a population, the more closer the measured properties of that sample resemble the actual values of the studied population. But astrologers interpret statistical and empirical rules in an alternative way.

When Gauquelin's work on the Mars effect of medical doctors was redone with larger groups by a Belgian Committee, the found differences became less outspoken. Statisticians explained this as regression toward the mean. They concluded that Gauquelin's finding rested at least partly on the sampling error, that could be corrected for by taking larger samples. And when the effect size would be smaller with a large sample, there could be as well no effect at all.

Astrologers explained the less impressive results of the Belgian Committee by claiming that only Gauquelin's from the Who is who books selected professionals were iconic enough. The by the Belgians added not that famous medical doctors were just less special and thus would have a smaller Mars effect. So there would be an astrologically explainable reversion to mediocrity: Only the most celebrated types selected by Gauquelin would fully express the Mars effect and when adding many more mediocre professionals to the study group, their combined Mars and Jupiter effects would be less strong.

From the point of view of astrologers chance does not exist. At least not in the unique horoscopes they studied. So if a top ten of the greatest painters of the world would show certain patterns in their horoscopes, they would be regarded as typical of a genial painter. No control group and study of variation were needed, because there study group was unique. And when others did not see that pattern in the ADB category fine art artist (n = 1902), astrologers could simply explain away the discrepancy by stating that most of them were not that genial.

A statistician would say: You only studied 10 cases, while you should know that there are 12 signs for each planet! Imagine what would happen if we tested a dice with only 5 throws. You would at least miss one facet! And in your top 10 at least two and probably more possibilities are missed as P(x=0) is 41.9 % for p =1/12 and n=10 having an expected frequency of 3,49. This sample cannot be representative!

But the astrologer could majestically reply: We did not test a dice. Our selection was not random. We studied the top 10 painters and only them. So, the planets in sign we missed could not be typical of a genial artist. You can reproduce our experiment if you want with our selection. Then you would see that in our practice chance does not exist.

The unspoken premise and conclusion is that chance does not exist when we deal with unique events or samples. But applying this argumentation scheme to an indeed always changing reality is also a circle argument that cannot lead to any progress in astrology. For any astrology to be taken seriously, effect sizes do matter. And you cannot reasonably estimate and evaluate effect sizes and their confidence intervals without having some basic knowledge of statistics.

When an effective medical treatment against placebo is tested, we would expect more clear differences in effect size, the larger the sample size. Thus, with a larger sample size the initial effect size of 2,0 (+/- 0,5), could become 1,9 (+/- 0,2), but not 1,1 (+/- 0,2). See: The calculation of the effectiveness of medication.

In our opinion this methodology should also apply to presumed astrological effects. See: The relative risk of having an accident during a Mars conjunct Ascendant transit . When we compared small and large ADB categories with the ADB as a whole, the based on the sampling error regression to the mean rule clearly applies. The larger the categories, the smaller the effect sizes we encountered. And most statistically significant effect sizes were so small to predict with.

When studying many large tables as the ADB research group did (data-mining), there is similar sampling problem. If we do 100 tests with a significance level of 5%, on average five of them will just be significant by chance. They could be called false positive, as they suggest that something unusual is found, when actually nothing special happened.

If we only published the significant test results - suppose some 15 were found - there would be a publication bias in which on average 5 of the 15 test results could just be accidental findings. But the problem is that we can not reasonably know which results are due to chance and which are not.

Actually

every observation will contain both elements, statistical noise and

real trends. So the variation caused by sampling errors can

hide small, but relevant effects, or exaggerate minimal trends, just

by chance. For this reason many more unbiased studies have to

be done and combined to see the real patterns, as just by

chance findings are unlikely to be repeated again. As only real

effects and systematic forms of bias do keep their patterns in time.

But biased observations do the same.

Actually

every observation will contain both elements, statistical noise and

real trends. So the variation caused by sampling errors can

hide small, but relevant effects, or exaggerate minimal trends, just

by chance. For this reason many more unbiased studies have to

be done and combined to see the real patterns, as just by

chance findings are unlikely to be repeated again. As only real

effects and systematic forms of bias do keep their patterns in time.

But biased observations do the same.

Is that error a reason to distrust statistics as many astrologers do? No. Professional astrologers also study and compare a lot of charts. But statisticians are aware of the statistical problems, estimate confidence intervals and can correct for multiple tests by decreasing the alpha.

So instead of stating in my experience plumbers have Mercury in Scorpio, one could better provide a more factual summary of the found data by stating: In one study of 12 plumbers, four of them had Mercury in Scorpio (p> 3 is = 0,014). See: The plumber's story for more details.

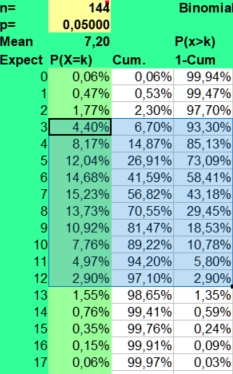

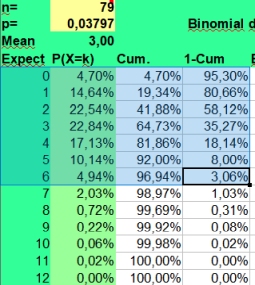

In our study 12x12 contingency tables with 144 found values are presented. With an alpha of 0,05 and 144 values, on average 7 extreme values per table could be found just by chance. If you fill in the binomial distribution with p = alpha and n= 144, you could see in the picture on the left that getting 3 up to 12 significantly lower or higher values per table would be quite normal (expected in 95% of the cases). And 13 would be borderline high value, as the risk of P>12 is 2,90%.

For

this reason most statisticians advise us to use a smaller alpha when

many values are compared. If we screened for unusual findings in a

12x12 table with an alpha of 0,01, much less false positives would be

found (mean 1, values above 4 being unlikely with a risk of 1,53%).

See the picture on the right.

For

this reason most statisticians advise us to use a smaller alpha when

many values are compared. If we screened for unusual findings in a

12x12 table with an alpha of 0,01, much less false positives would be

found (mean 1, values above 4 being unlikely with a risk of 1,53%).

See the picture on the right.

This statistical fact would be a good reason to use the mean +/- 2,58 sd values of the normal distribution in the binomial calculator as well. They give an indication of the to be expected 99% confidence interval. This approach yields much less statistical noise. When an astrological parameter is really predictive when interpreting charts, it should also pop up as being statically significant with a much smaller alpha.

Statisticians speak of the type 1 error when a true Null hypothesis is wrongly rejected. As we saw, the risk of this error depend on the chosen alpha. The larger the alpha, the larger the risk on false positives.

When doing one large and expensive experimental study testing the effectiveness of a medication against placebo, an alpha of 5% is quite acceptable. Because the reverse situation of rejecting a likely to be effective medicine because of lack of statistical significance (type 2 error) should be avoided. For this reason the required sample size to statistically proof the claimed effect size is calculated before the experiment is done.

But our study is not a well designed study to statistically proof any before the study started clearly expressed claim. We did not compare one particular study group with one well designed control group to watch the results (prospective study), but we compared all the ADB categories with the found values in the ADB as a whole (retrospective study). We studied ADB categories as they were found and compared them to the ADB as a whole (convenience sampling). In this case of doing hundreds of comparisons of found values against expected means, only extremely unlikely values should be regarded as significant. Would it be a problem that the alpha of 1 % would give many false negatives? No, and certainly not for predictive astrology as only large effect sizes could be predictive for that purpose

We described the statistical methods used to evaluate the data. And we invite you to try our methods out yourself using free software. Our simple approach was just to publish all of the in the ADB available data, if they fit current astrological opinions or not. But to evaluate them statistical methods are needed.

Quite a lot of astrological claims could be rejected with the found facts, thus with the ADB being statistically evaluated. But that was not our intention. We were just interested in the found facts. And we used statistical techniques to answer the relevant questions that any student of astrology would ask when being confronted many horoscopes. Could we predict with it? Could astrologers predict with it? And how well?

As a control group we used the ADB version exported on November, 28 2018 ( adb_export_181128_2309), our selection of art critics also came from . The statistical advantage is that we compared samples of the same population. The category art critics is just a subset of the ADB, so it should have similar statistical properties as the ADB as a whole. If the ADB is more or less normally distributed, its selected categories should be normally distributed as well. And that allows us to do statistical tests on found and expected values.

The inclusion criteria were having 1) a Rodden rating of AA, A, B or C for the birth data and 2) the entry should refer to the birth chart of a person.

This control group of 55047 ADB data of persons enabled us to calculate the expected planet positions using the formula X expected is 79/55047 times X observed in the control group.

The observed frequencies in the control group were:

|

Aries |

Taurus |

Gemini |

Cancer |

Leo |

Virgo |

Libra |

Scorpio |

Sag |

Cap |

Aqua |

Pisces |

Total |

|

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

|

Sun |

4738 |

4771 |

4772 |

4702 |

4731 |

4511 |

4522 |

4259 |

4194 |

4449 |

4601 |

4797 |

55047 |

|

Moon |

4673 |

4521 |

4652 |

4483 |

4618 |

4505 |

4538 |

4567 |

4576 |

4618 |

4652 |

4644 |

55047 |

|

Mercury |

4432 |

4299 |

4194 |

4184 |

4409 |

4584 |

4717 |

4823 |

4830 |

4828 |

4916 |

4831 |

55047 |

|

Venus |

4906 |

4787 |

4548 |

5134 |

4103 |

5243 |

3884 |

4579 |

4439 |

3890 |

5147 |

4387 |

55047 |

|

Mars |

3983 |

4332 |

4871 |

5128 |

5497 |

5489 |

5192 |

4802 |

4319 |

3912 |

3779 |

3743 |

55047 |

|

Jupiter |

4136 |

4224 |

4140 |

4597 |

4786 |

5150 |

5229 |

5146 |

4833 |

4441 |

4173 |

4192 |

55047 |

|

Saturn |

4125 |

4220 |

4249 |

4053 |

4559 |

4745 |

4836 |

4912 |

5139 |

5023 |

4822 |

4364 |

55047 |

|

Uranus |

5178 |

4924 |

6210 |

5130 |

4331 |

4187 |

4100 |

3673 |

3884 |

4159 |

3987 |

5284 |

55047 |

|

Neptune |

1854 |

2938 |

3608 |

5166 |

7319 |

8227 |

9330 |

6504 |

4489 |

2695 |

1495 |

1422 |

55047 |

|

Pluto |

1871 |

4484 |

9025 |

13318 |

12163 |

6223 |

3279 |

1949 |

778 |

462 |

342 |

1153 |

55047 |

|

N Node |

4739 |

4663 |

4911 |

4826 |

4615 |

4615 |

4591 |

4365 |

4302 |

4305 |

4487 |

4628 |

55047 |

|

Chiron |

9290 |

7520 |

4691 |

3276 |

2604 |

1884 |

2098 |

2618 |

2915 |

3864 |

6038 |

8249 |

55047 |

|

Aries |

Taurus |

Gemini |

Cancer |

Leo |

Virgo |

Libra |

Scorpio |

Sag |

Cap |

Aqua |

Pisces |

Total |

|

|

Cusp 1 |

2559 |

3199 |

4384 |

5542 |

6059 |

5956 |

6018 |

5838 |

5462 |

4236 |

3235 |

2559 |

55047 |

|

Cusp 2 |

3185 |

3646 |

4535 |

5403 |

5545 |

5363 |

5472 |

5605 |

5172 |

4540 |

3482 |

3099 |

55047 |

|

Cusp 3 |

3650 |

4119 |

4759 |

5192 |

5019 |

4917 |

4755 |

5161 |

5204 |

4599 |

4061 |

3611 |

55047 |

|

Cusp 4 |

4170 |

4468 |

5029 |

4962 |

4634 |

4192 |

4400 |

4495 |

5016 |

4974 |

4517 |

4190 |

55047 |

|

Cusp 5 |

4742 |

4947 |

5141 |

4650 |

4149 |

3788 |

3722 |

4103 |

4784 |

5118 |

5125 |

4778 |

55047 |

|

Cusp 6 |

5323 |

5481 |

5157 |

4581 |

3552 |

3219 |

3176 |

3642 |

4548 |

5407 |

5490 |

5471 |

55047 |

|

Cusp 7 |

6018 |

5838 |

5462 |

4236 |

3235 |

2559 |

2559 |

3199 |

4384 |

5542 |

6059 |

5956 |

55047 |

|

Cusp 8 |

5472 |

5605 |

5172 |

4540 |

3482 |

3099 |

3185 |

3646 |

4535 |

5403 |

5545 |

5363 |

55047 |

|

Cusp 9 |

4755 |

5161 |

5204 |

4599 |

4061 |

3611 |

3650 |

4119 |

4759 |

5192 |

5019 |

4917 |

55047 |

|

Cusp 10 |

4400 |

4495 |

5016 |

4974 |

4517 |

4190 |

4170 |

4468 |

5029 |

4962 |

4634 |

4192 |

55047 |

|

Cusp 11 |

3722 |

4103 |

4784 |

5118 |

5125 |

4778 |

4742 |

4947 |

5141 |

4650 |

4149 |

3788 |

55047 |

|

Cusp 12 |

3176 |

3642 |

4548 |

5407 |

5490 |

5471 |

5323 |

5481 |

5157 |

4581 |

3552 |

3219 |

55047 |

|

H1 |

H2 |

H3 |

H4 |

H5 |

H6 |

H7 |

H8 |

H9 |

H10 |

H11 |

H12 |

Total |

|

|

Sun |

5220 |

4975 |

4643 |

4287 |

4020 |

3961 |

4085 |

4141 |

4197 |

5072 |

5162 |

5284 |

55047 |

|

Moon |

4541 |

4557 |

4628 |

4567 |

4555 |

4554 |

4675 |

4493 |

4569 |

4579 |

4648 |

4681 |

55047 |

|

Mercury |

5187 |

5060 |

4808 |

4370 |

4100 |

4029 |

4053 |

3992 |

4448 |

4757 |

5036 |

5207 |

55047 |

|

Venus |

5124 |

4911 |

4656 |

4273 |

4220 |

4034 |

4080 |

4146 |

4463 |

4827 |

5096 |

5217 |

55047 |

|

Mars |

4732 |

4677 |

4523 |

4355 |

4400 |

4231 |

4454 |

4568 |

4554 |

4825 |

4802 |

4926 |

55047 |

|

Jupiter |

4529 |

4629 |

4657 |

4586 |

4663 |

4589 |

4499 |

4496 |

4538 |

4731 |

4481 |

4649 |

55047 |

|

Saturn |

4696 |

4650 |

4526 |

4680 |

4619 |

4622 |

4633 |

4467 |

4412 |

4481 |

4603 |

4658 |

55047 |

|

Uranus |

4580 |

4421 |

4436 |

4477 |

4561 |

4472 |

4664 |

4632 |

4779 |

4678 |

4776 |

4571 |

55047 |

|

Neptune |

4530 |

4517 |

4463 |

4483 |

4582 |

4496 |

4577 |

4756 |

4794 |

4582 |

4648 |

4619 |

55047 |

|

Pluto |

3871 |

3757 |

3993 |

3951 |

3853 |

3866 |

5217 |

5231 |

5327 |

5273 |

5417 |

5291 |

55047 |

|

N Node |

4520 |

4525 |

4505 |

4536 |

4717 |

4603 |

4528 |

4535 |

4679 |

4603 |

4627 |

4669 |

55047 |

|

Chiron |

4541 |

4553 |

4464 |

4566 |

4656 |

4564 |

4624 |

4538 |

4652 |

4669 |

4579 |

4641 |

55047 |

The

control group is large enough to test some assumptions using the

normal distribution. Are the planets evenly distributed in

sign?

The

control group is large enough to test some assumptions using the

normal distribution. Are the planets evenly distributed in

sign?

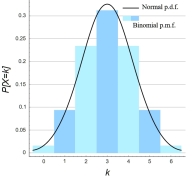

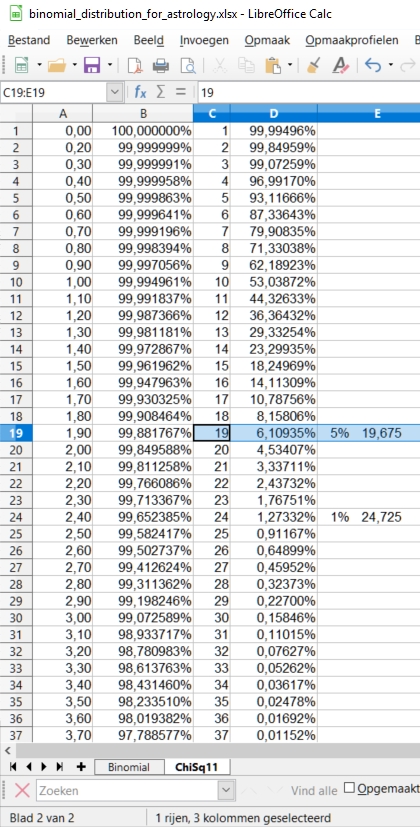

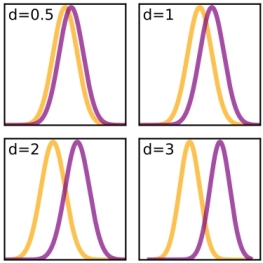

We could make use of the empirical statistical rule, that states that if the sample size is large enough, the shapes of the discrete binomial distribution and the continuous normal distribution will be similar. This will be most apparent with coin tossing (p =0,5), as shown in the picture on the right taken from the Wikipedia, because then we do not see any skewness in the binomial distribution. But when the expected value np > 10 and n(1-p) are larger than 10 the similarity rule applies. Less strict criteria use np > 5.

Translated to the astrological practice with p = 1/12, sample sizes of selected ADB categories should be in the order of 120 (60) or higher to expect roughly a normal distribution for the values of the observed frequencies. But is that enough to show astrological effects in a statistical way?

Let us go on with the interpretation of numbers like statisticians do, and apply the above mathematical considerations to astrological data. If the planets in sign are evenly distributed, then the found frequencies should be near the expected value np (mean) in terms of standard deviations. The standard deviation can be estimated as the square root of variance np(1-p) of the binomial distribution when np is large enough (preferably > 10, but > 5 might do).

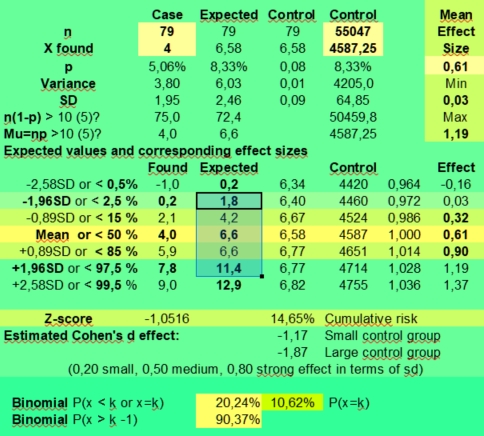

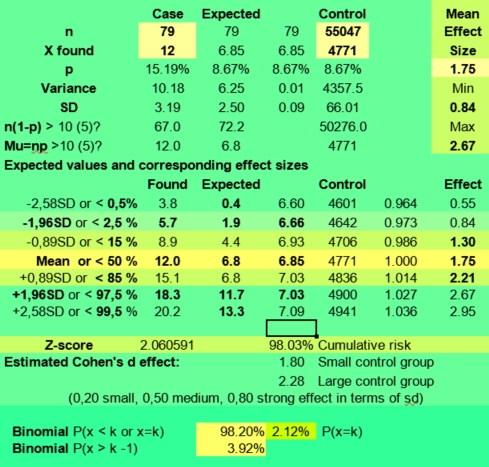

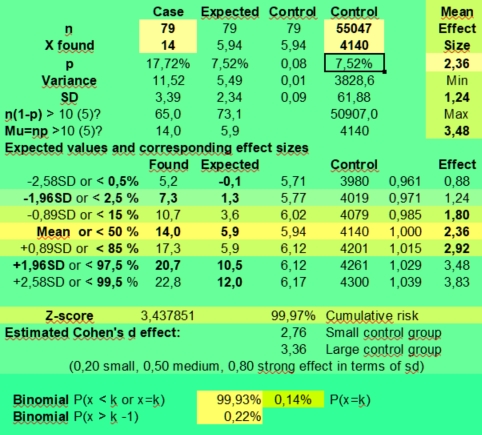

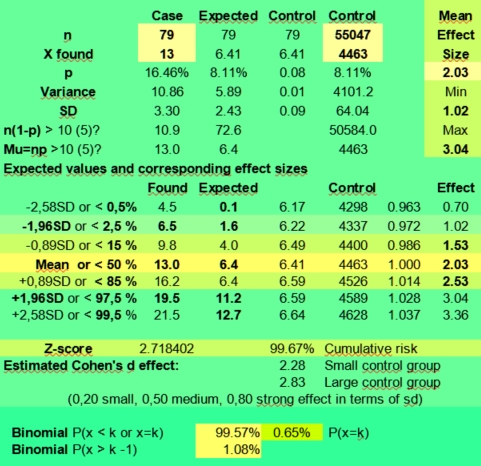

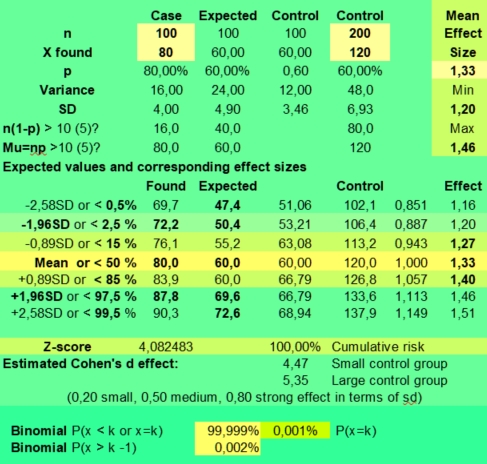

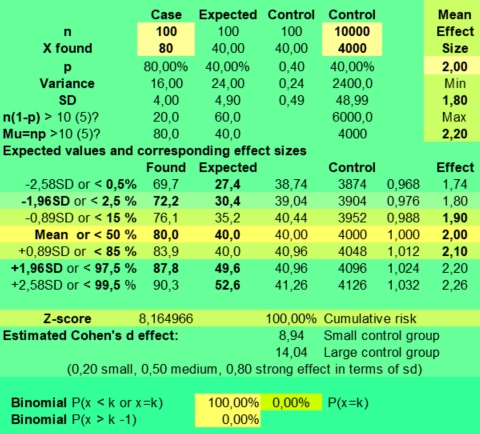

The spreadsheet which we use to calculate these expected frequencies is again : Binomial_distribution_for_astrology.xlsx or Binomial_distribution_for_astrology.ods. On the right it contains a simple calculator where you can fill in the numbers found and expected for the case and control groups and their sample sizes (yellow area). Then it will calculate the expected probability p for the case and control groups, the variance and standard deviations, some parameters like n(1-p) and np and most important for our purpose the Expected frequencies and corresponding effect sizes.

When

the control groups consists of n = 55047 persons, then the mean value

with p is 1/12 is 4587,25 and the expected standard deviation would

be 64,8. See the upper values in 5th column.

When

the control groups consists of n = 55047 persons, then the mean value

with p is 1/12 is 4587,25 and the expected standard deviation would

be 64,8. See the upper values in 5th column.

According to statisticians 70 % of the values should fall in the np +/- 0,89 SD range of 4524 - 4651 and circa 95% of the values in the np +/- 1,96 SD range of 4460 - 4714.

In the large control group, some 70 % of observed frequencies would vary only 1,4 % around the mean (effect sizes of 0,986 - 1,014) and some 95% of expected frequencies would vary at most 2,8 % around the mean (effect sizes of 0,972 - 1,028).

So tiny effect sizes of +/ - 2,9 % or more could be statistical significant with a sample size of 55047, p is 1/12 and an alpha of 0,05. If you would use an alpha of 0,01 effect sizes of 3,7 % or larger were needed (sixth lower column gives a range of 0,964 - 1,036).

But a found effect size of 0,61 (39 % less) with k is 4 against 6,58 expected for the small case group with n is 79, has a wide range of not significant effect sizes if the sampling error is taken into account: 0,03 - 1,19 (upper right in the calculator). Only found values of 0, 1 and 12 and higher would fall outside the expected 95% confidence interval of 1,8 - 11,4 (third column) of values that in 95% of samples just could have been arisen by chance.

And if we would use an alpha of 1 % to compensate for our data mining, only values above 12,9 having an effect size of 1,83 or more would be significant, and the rest just could be discarded as being a likely result of the sampling error.

When you would fill in X found is 40 with n is 790, the range of estimated effect sizes would still be 0,42 - 0,79 (95 %) with an expected value of 65,8 (50,6 - 81,1). With X is 120 and n is 790 as in the Sun in Taurus case, the effect size range is 1,52 - 2,12 (95 %), but with k is 12 and n =79 having Sun in taurus could have both positive or negative effects with an expected effect size range from 0,87 to 2,77. It is actually 0,84 - 2,67 when using the found value 4771 for Sun in Taurus of the control group instead of the expected value of +55047/12.

So you see that both the exact approach using the binomial calculator as the normal distribution approach for this problem produce similar results. In both cases we had to conclude that a value of 12 found against 6,58 expected (mean effect size 1,75) was not significant enough to provide evidence of a positive effect given the small sample size of 79. It could be a real positive effect that would be repeated again when doing more research, but this assumption still has to be proven.

Is the ADB evenly distributed? No, certainly not. This is most evident for slow planets in sign, but it also applies to the personal planets with the exception of the fast moving moon. The found frequencies deviate more from the mean than we expected. With p is 1/12 and n is 55047 we expect values that deviated only ± 1% (± 1sd) from the mean in 70% of cases or ± 3% (± 2 sd) from the mean in 95% of cases, but as you can see the lowest frequency of 4194 for Sun in Sagittarius in the control group is 91% of the expected value. The highest value for sun sign Pisces (4797) is 105% of the mean. The actual standard deviations found in the rows are shown in the last column in the table below.

|

Aries |

Taurus |

Gemini |

Cancer |

Leo |

Virgo |

Libra |

Scorpio |

Sag |

Cap |

Aqua |

Pisces |

SD |

|

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

|

Sun |

4738 |

4771 |

4772 |

4702 |

4731 |

4511 |

4522 |

4259 |

4194 |

4449 |

4601 |

4797 |

195,8 |

|

Moon |

4673 |

4521 |

4652 |

4483 |

4618 |

4505 |

4538 |

4567 |

4576 |

4618 |

4652 |

4644 |

61,8 |

|

Mercury |

4432 |

4299 |

4194 |

4184 |

4409 |

4584 |

4717 |

4823 |

4830 |

4828 |

4916 |

4831 |

260,3 |

|

Venus |

4906 |

4787 |

4548 |

5134 |

4103 |

5243 |

3884 |

4579 |

4439 |

3890 |

5147 |

4387 |

453,0 |

|

Mars |

3983 |

4332 |

4871 |

5128 |

5497 |

5489 |

5192 |

4802 |

4319 |

3912 |

3779 |

3743 |

629,1 |

|

Jupiter |

4136 |

4224 |

4140 |

4597 |

4786 |

5150 |

5229 |

5146 |

4833 |

4441 |

4173 |

4192 |

410,8 |

|

Saturn |

4125 |

4220 |

4249 |

4053 |

4559 |

4745 |

4836 |

4912 |

5139 |

5023 |

4822 |

4364 |

358,3 |

|

Uranus |

5178 |

4924 |

6210 |

5130 |

4331 |

4187 |

4100 |

3673 |

3884 |

4159 |

3987 |

5284 |

719,6 |

|

Neptune |

1854 |

2938 |

3608 |

5166 |

7319 |

8227 |

9330 |

6504 |

4489 |

2695 |

1495 |

1422 |

2606,6 |

|

Pluto |

1871 |

4484 |

9025 |

13318 |

12163 |

6223 |

3279 |

1949 |

778 |

462 |

342 |

1153 |

4410,1 |

|

N Node |

4739 |

4663 |

4911 |

4826 |

4615 |

4615 |

4591 |

4365 |

4302 |

4305 |

4487 |

4628 |

185,8 |

|

Chiron |

9290 |

7520 |

4691 |

3276 |

2604 |

1884 |

2098 |

2618 |

2915 |

3864 |

6038 |

8249 |

2459,6 |

Clearly, the planets in the large ADB control group are not evenly distributed in sign. The slower the planet as seen from the earth is moving, the less even is its distribution in the ADB. And the higher the standard deviations become.

If we express the found values in terms of expected standard deviations away from the mean, thus (x-np) / sd or (x-55047/12) / 64,85, we see again that only the values of the fast moving moon fall within the expected mean +/- 2 sd range.

|

Aries |

Taurus |

Gemini |

Cancer |

Leo |

Virgo |

Libra |

Scorpio |

Sag |

Cap |

Aqua |

Pisces |

|

|---|---|---|---|---|---|---|---|---|---|---|---|---|

|

Sun |

2,32 |

2,83 |

2,85 |

1,77 |

2,22 |

-1,18 |

-1,01 |

-5,06 |

-6,06 |

-2,13 |

0,21 |

3,23 |

|

Moon |

1,32 |

-1,02 |

1,00 |

-1,61 |

0,47 |

-1,27 |

-0,76 |

-0,31 |

-0,17 |

0,47 |

1,00 |

0,88 |

|

Mercury |

-2,39 |

-4,45 |

-6,06 |

-6,22 |

-2,75 |

-0,05 |

2,00 |

3,64 |

3,74 |

3,71 |

5,07 |

3,76 |

|

Venus |

4,92 |

3,08 |

-0,61 |

8,43 |

-7,47 |

10,11 |

-10,84 |

-0,13 |

-2,29 |

-10,75 |

8,63 |

-3,09 |

|

Mars |

-9,32 |

-3,94 |

4,38 |

8,34 |

14,03 |

13,91 |

9,33 |

3,31 |

-4,14 |

-10,41 |

-12,46 |

-13,02 |

|

Jupiter |

-6,96 |

-5,60 |

-6,90 |

0,15 |

3,06 |

8,68 |

9,90 |

8,62 |

3,79 |

-2,26 |

-6,39 |

-6,10 |

|

Saturn |

-7,13 |

-5,66 |

-5,22 |

-8,24 |

-0,44 |

2,43 |

3,84 |

5,01 |

8,51 |

6,72 |

3,62 |

-3,44 |

|

Uranus |

9,11 |

5,19 |

25,02 |

8,37 |

-3,95 |

-6,17 |

-7,51 |

-14,10 |

-10,84 |

-6,60 |

-9,26 |

10,74 |

|

Neptune |

-42,15 |

-25,43 |

-15,10 |

8,93 |

42,13 |

56,13 |

73,14 |

29,56 |

-1,52 |

-29,18 |

-47,69 |

-48,81 |

|

Pluto |

-41,89 |

-1,59 |

68,44 |

134,64 |

116,83 |

25,23 |

-20,17 |

-40,68 |

-58,74 |

-63,62 |

-65,47 |

-52,96 |

|

N Node |

2,34 |

1,17 |

4,99 |

3,68 |

0,43 |

0,43 |

0,06 |

-3,43 |

-4,40 |

-4,35 |

-1,55 |

0,63 |

|

Chiron |

72,52 |

45,23 |

1,60 |

-20,22 |

-30,58 |

-41,69 |

-38,39 |

-30,37 |

-25,79 |